3. Setting minimum and maximum memory values

When SQL Server starts, it acquires enough memory to initialize, beyond which it acquires and releases memory as required. The minimum and maximum memory values control the upper limit to which SQL Server will acquire memory (maximum), and the point at which it will stop releasing memory back to the operating system (minimum).

As you saw earlier in figure 7.1, the minimum and maximum memory values for an instance can be set in SQL Server Management Studio or by using the sp_configure command. By default, SQL Server's minimum and maximum memory values are 0MB and 2,147,483,647MB, respectively. The default min/max settings essentially allow SQL Server to cooperate with Windows and other applications by acquiring and releasing memory in conjunction with the memory requirements of other applications.
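As a minimal sketch of the sp_configure approach, the following T-SQL displays the current minimum and maximum memory values for an instance. It assumes you have permission to change configuration options; both memory settings are advanced options, so 'show advanced options' must be enabled before they are visible.

```sql
-- Make advanced options (including the memory settings) visible
EXEC sp_configure 'show advanced options', 1;
RECONFIGURE;

-- Display the configured and running values, in MB
EXEC sp_configure 'min server memory (MB)';
EXEC sp_configure 'max server memory (MB)';
```

On an instance still using the defaults, the configured values should match the 0 and 2,147,483,647 figures mentioned above.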

On small systems with a single SQL Server instance, the default memory values will probably work fine in most cases, but on larger systems we need to give this a bit more thought. Let's consider a number of cases where setting min/max values is required, beginning with systems that lock pages in memory.

Lock Pages in Memory

As you know, when the Lock Pages in Memory setting is enabled, SQL Server's memory will not be paged out to disk. This is clearly good from a SQL Server performance point of view, but consider a case where the maximum memory value isn't set, and SQL Server is placed under significant load. The default memory settings don't enforce an upper limit to the memory usage, so SQL Server will continue to consume as much memory as it can get access to, all of which will be locked and therefore will potentially starve other applications (including Windows) of memory. In some cases, this memory starvation effect can lead to system stability issues.

In SQL Server 2005 and above, even with the Lock Pages in Memory option enabled, SQL Server will respond to memory pressure by releasing some of its memory back to the operating system. However, depending on the state of the server, it may not be able to release memory quickly enough, again leading to possible stability issues.

For the reasons just outlined, systems that use the Lock Pages in Memory option should also set a maximum memory value, leaving enough memory for Windows. We'll cover how much to leave shortly.
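One way to see whether an instance is actually holding locked pages is sketched below. It assumes SQL Server 2008 or later, where the sys.dm_os_process_memory view is available, and a login with VIEW SERVER STATE permission.

```sql
-- A nonzero locked_page_allocations_mb suggests the instance is locking pages in memory
SELECT physical_memory_in_use_kb   / 1024 AS physical_memory_in_use_mb,
       locked_page_allocations_kb  / 1024 AS locked_page_allocations_mb
FROM sys.dm_os_process_memory;
```

If locked pages are in use and the maximum memory value is still at its default, that instance is a candidate for the maximum memory setting discussed in this section.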

Multiple instances

A server containing multiple SQL Server instances needs special consideration when setting min/max memory values. Consider a server with three instances, each of which is configured with the default memory settings. If one of the instances starts up and begins receiving a heavy workload, it will potentially consume all of the available memory. When one of the other instances starts, it will find itself with very little physical memory, and performance will obviously suffer.

In such cases, I recommend setting the maximum memory value of each instance to an appropriate level (based on the load profile of each).

Shared servers

On servers in which SQL Server is sharing resources with other applications, setting the minimum memory value helps to prevent situations in which SQL Server struggles to receive adequate memory. Of course, the ideal configuration is one in which the server is dedicated to SQL Server, but this is not always the case, unfortunately.

A commonly misunderstood aspect of the minimum memory value is whether or not SQL Server reserves that amount of memory when the instance starts. It doesn't.

When started, an instance consumes memory

dynamically up to the level specified in the maximum memory setting.

Depending on the load, the consumed memory may never reach the minimum

value. If it does, memory will be released back to the operating system

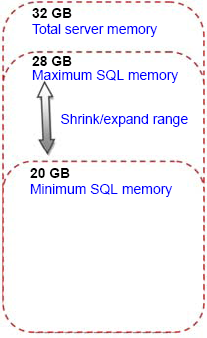

if required, but will never drop below the value specified in the minimum setting. Figure 3 shows the relationship between a server's memory capacity and SQL Server's minimum and maximum memory values.
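To observe this behavior on a running instance, the configured limits can be compared with the memory SQL Server currently holds. The queries below are a sketch that assumes VIEW SERVER STATE permission; the Total and Target Server Memory counters are exposed through the Memory Manager performance object.

```sql
-- Configured minimum and maximum values currently in effect (MB)
SELECT name, value_in_use
FROM sys.configurations
WHERE name IN ('min server memory (MB)', 'max server memory (MB)');

-- Memory the instance currently holds (Total) and is aiming for (Target), in MB
SELECT counter_name, cntr_value / 1024 AS value_mb
FROM sys.dm_os_performance_counters
WHERE object_name LIKE '%Memory Manager%'
  AND counter_name IN ('Total Server Memory (KB)', 'Target Server Memory (KB)');
```

Shortly after startup on a lightly loaded instance, Total Server Memory may well sit below the configured minimum, which is consistent with the point that the minimum value is not reserved up front.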

Clusters

Configuring memory maximums in a multi-instance cluster is important in ensuring stability during failover situations. You must ensure that the total of the maximum memory values across all instances in the cluster is less than the total available memory on any one cluster node that the instances may end up running on during a node outage.

Setting the maximum memory values in such a manner is important to ensure adequate and consistent performance during failover scenarios.
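The failover rule can be checked with some simple arithmetic. The following sketch uses entirely hypothetical figures (instance names, a 32GB node, and a 4GB allowance for Windows and other components are all assumptions for illustration) to test whether the combined maximums would fit on a single node.

```sql
-- Hypothetical figures for illustration only
DECLARE @smallest_node_mb   int = 32768;  -- physical memory on the smallest node (assumed)
DECLARE @windows_reserve_mb int = 4096;   -- memory left for Windows and other components (assumed)

-- Planned maximum memory value for each clustered instance (hypothetical)
DECLARE @instances TABLE (instance_name sysname, max_server_memory_mb int);
INSERT INTO @instances VALUES
    (N'INST1', 12288),
    (N'INST2', 10240),
    (N'INST3', 6144);

-- Compare the combined maximums with what a single node can offer
SELECT SUM(max_server_memory_mb)              AS total_max_mb,
       @smallest_node_mb - @windows_reserve_mb AS budget_mb,
       CASE WHEN SUM(max_server_memory_mb) <= @smallest_node_mb - @windows_reserve_mb
            THEN 'OK' ELSE 'Overcommitted' END AS failover_check
FROM @instances;
```

Basing the check on the smallest node is a deliberately conservative choice: it covers the case in which every instance ends up on that node during an outage.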

Amount of memory to leave Windows

One of the important memory configuration considerations, particularly for 32-bit AWE systems and 64-bit systems that lock pages in memory, is the amount of memory to leave Windows. For example, in a dedicated SQL Server system with 32GB of memory, we'll obviously want to give SQL Server as much memory as possible, but how much can be safely allocated? Put another way, what should the maximum memory value be set to? Let's consider what other possible components require RAM:

Windows

Drivers for host bus adapter (HBA) cards, tape drives, and so forth

Antivirus software

Backup software

Microsoft Operations Manager (MOM) agents, or other monitoring software

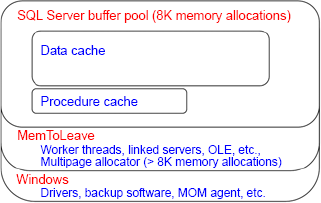

As shown in figure 4, in addition to the above non-SQL Server components, there are a number of SQL Server objects that use memory from outside the buffer pool, which is the memory area defined by the maximum memory setting. Memory for objects such as linked servers, extended stored procedures, and object linking and embedding (OLE) automation objects is allocated from an area commonly called the MemToLeave area.

As you can see, even on a dedicated server, there's a potentially large number of components vying for memory access, all of which comes from outside the buffer pool, so leaving enough memory is crucial for a smooth-running server. The basic rule of thumb when allocating maximum memory is that the sum of the maximum memory values across all instances should be at least 2GB less than the total physical memory installed in the server; however, for some systems, leaving 2GB of memory may not be enough. For systems with 32GB of memory, a commonly used value for Max Server Memory (totaled across all installed instances) is 28GB, leaving 4GB for the operating system and other components that we discussed earlier.
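For the 32GB example, the 28GB figure translates to 28,672MB (28 x 1,024). A minimal sketch of applying it with sp_configure follows, assuming a single instance on a dedicated server; with multiple instances, the 28GB would be divided among them.

```sql
-- The memory settings are advanced options
EXEC sp_configure 'show advanced options', 1;
RECONFIGURE;

-- Cap the instance at 28GB, leaving 4GB for Windows and other components
EXEC sp_configure 'max server memory (MB)', 28672;
RECONFIGURE;
```

The value here is a starting point rather than a recommendation for any particular server; the right figure depends on what else is running on the machine.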

Given the wide variety of possible system configurations and usage, it's not possible to come up with a single best figure for the Max Server Memory value. Determining the best maximum value for an environment is another example of the importance of a load-testing environment configured identically to production, and an accurate test plan that loads the system as per the expected production load. Load testing in such an environment will establish expectations of likely production performance, and offers the opportunity to test various settings and observe the resulting performance.

One of the great things about SQL Server, particularly the recent releases, is its self-tuning nature. Its default settings, together with its ability to sense and adjust, make the job of a DBA somewhat easier. In the next section, we'll see how these attributes apply to CPU configuration.